
While the three Vs provide a foundational understanding, the complexity of Big Data is further encapsulated by: Variety: The different types of data, including structured, semi-structured, and unstructured data.Velocity: The speed at which new data is produced and collected.Volume: The sheer quantity of data generated.The three Vs often characterize Big Data: The creation of Big Data can be attributed to the exponential growth of data from various sources like social media, IoT devices, e-commerce platforms, and more. But what exactly is Big Data, and why has it become the cornerstone of modern analytics, especially in ETL and data warehousing? Defining Big DataĪt its core, Big Data refers to the enormous volumes of data that can't be processed effectively with traditional apps. Overview of Big Data and Data LakesīigData has become synonymous with the ever-growing amounts of daily information businesses and individuals generate. In the broader context of data warehousing and analytics, ETL tools are not just facilitators they are enablers, empowering businesses to harness the true potential of their data. Their integration with contemporary data warehousing solutions ensures businesses have a seamless data pipeline from data extraction to insights generation. In addition, with the rise of cloud computing, many ETL tools are now cloud-native, ensuring scalability, flexibility, and cost-efficiency. They now offer capabilities for stream processing, allowing businesses to process data in real-time, and machine learning integrations to predict trends and anomalies. Beyond these core functionalities, modern ETL tools are embracing the challenges posed by big data and real-time analytics.

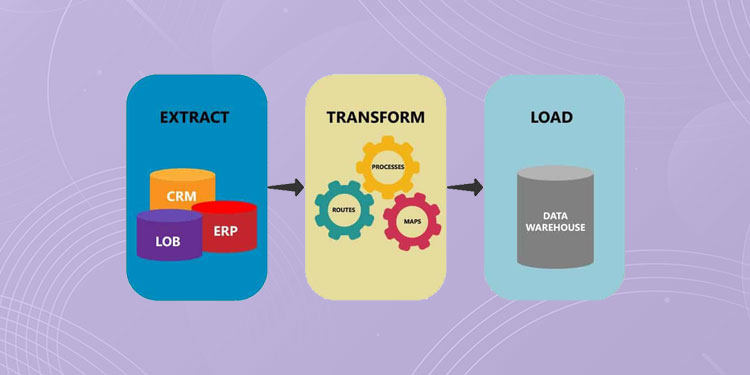

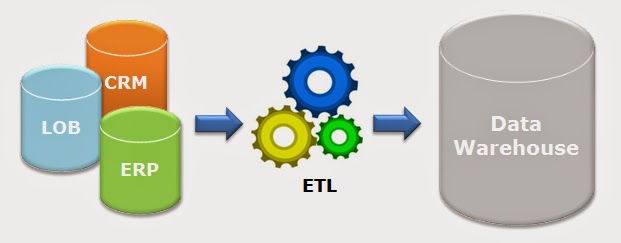

Here, data undergoes rigorous cleansing to remove anomalies, enrichment to augment its value, and structuring to make it suitable for analytical endeavors. However, their real prowess is showcased during the transformation phase. These tools can extract data from many sources, be it traditional relational databases, NoSQL systems, or cloud-based platforms like Amazon and AWS. The Basics of ETL Tools and ETL PipelinesĮTL tools, often used in conjunction with SQL, are foundational pillars in data engineering designed to address the complexities of data management. The Basics of ETL Tools and ETL Pipeline.The importance of data cleansing, validation, and the use of a staging area before loading data into the target data warehouse. The undeniable benefits of ETL tools in ensuring data quality, deduplication, and consistency. The debate between cloud-based ETL tools and open-source alternatives.

The technicalities of ETL processes and their significance in big data analytics. The role of OLAP in modern data warehousing. The distinction between ETL and ELT and their respective advantages. Here are the key things you need to know about ETL and Data Warehousing:

This article breaks down ETL and data warehousing, providing insights into the tools, techniques, and best practices that drive modern data engineering. As businesses generate large amounts of data from different sources, efficient data integration and storage solutions become crucial. Understanding ETL (extract, transform, and load) and data warehousing is essential for data engineering and analysis.
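The ETL flow this article describes (extract, cleanse and validate, stage, deduplicate, load) can be sketched in a few lines of Python. This is only a minimal illustration, not how any particular ETL tool works: the sample records, the `orders` and `staging_orders` table names, and the validation rules are all assumptions made for the example, and an in-memory SQLite database stands in for the target data warehouse.

```python
import sqlite3

# Hypothetical raw records standing in for rows pulled from several sources.
RAW_ROWS = [
    {"order_id": "1001", "customer": " Alice ", "amount": "19.99"},
    {"order_id": "1002", "customer": "Bob", "amount": "5.00"},
    {"order_id": "1001", "customer": "Alice", "amount": "19.99"},  # duplicate
    {"order_id": "1003", "customer": "", "amount": "7.50"},        # missing customer
    {"order_id": "1004", "customer": "Dana", "amount": "n/a"},     # bad amount
]


def extract():
    """Extract: return raw rows (a real pipeline would query databases or APIs)."""
    return list(RAW_ROWS)


def transform(rows):
    """Transform: cleanse and validate rows, dropping any that fail the checks."""
    clean = []
    for row in rows:
        customer = row["customer"].strip()
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # reject rows whose amount is not numeric
        if not customer:
            continue  # reject rows with no customer name
        clean.append((int(row["order_id"]), customer, amount))
    return clean


def load(conn, rows):
    """Load: write to a staging table, then deduplicate into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS staging_orders "
        "(order_id INTEGER, customer TEXT, amount REAL)"
    )
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO staging_orders VALUES (?, ?, ?)", rows)
    # INSERT OR IGNORE skips rows whose primary key already exists in the target.
    conn.execute(
        "INSERT OR IGNORE INTO orders "
        "SELECT order_id, customer, amount FROM staging_orders"
    )
    conn.execute("DELETE FROM staging_orders")  # empty the staging area
    conn.commit()


def run_pipeline(conn):
    """Run extract -> transform -> load and return the target table's rows."""
    load(conn, transform(extract()))
    return conn.execute(
        "SELECT order_id, customer, amount FROM orders ORDER BY order_id"
    ).fetchall()
```

Calling `run_pipeline(sqlite3.connect(":memory:"))` leaves only the valid, de-duplicated orders in the target table. Keeping the staging table separate from the target means a failed or partial batch never touches the warehouse table directly, which is the main reason the staging step is usually recommended.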
